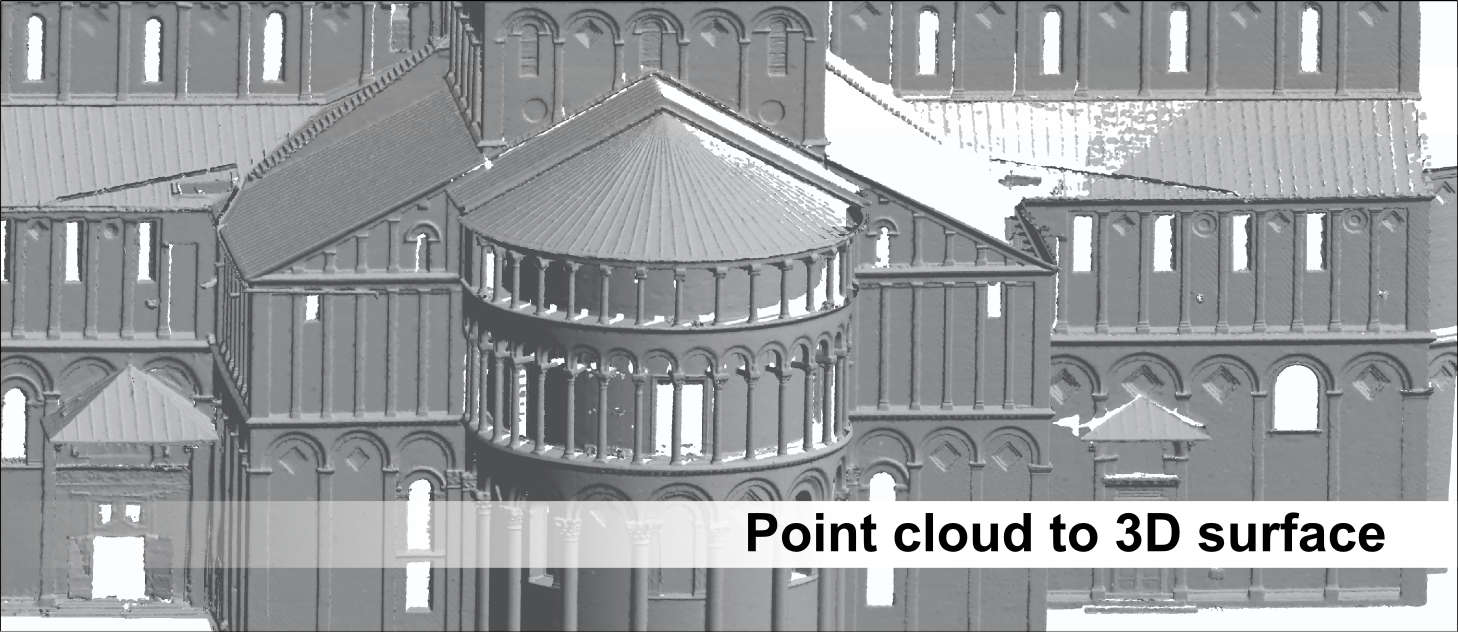

Things get easier when depth information is available.

These days I have been trying to align multiple images obtained by a custom stereo camera. In the beginning, I thought I could just use the conventional method, such as matching the feature points between two consecutive images. Since it is a stereo camera, depth information is right at my disposal, so things seemed straightforward. At first, we thought it might not work, since we are taking pictures of objects with low or random texture, which might make the feature points difficult to match. After a painful tuning process, it finally worked.

The left image of the custom stereo camera is taken as the base image, and the depth is calculated with respect to the left image. Because the camera is constantly moving, we use the left images at different camera poses to compute the alignment. After the images are aligned, the point clouds, which are obtained by reprojecting the depth information into 3D space, are aligned in MeshLab. Thanks to OpenCV, we have everything we need to accomplish this task:

1. Perform stereo reconstruction at the new camera location.
2. Reproject the depth information to 3D space, forming a point cloud.
3. Take the left images of the current and previous camera locations.
4. Compute and match the feature points in those images.
5. Calculate the relative camera pose between the two camera locations.
6. Repeat the above steps until all the camera locations are processed.
7. Compose a MeshLab project file, import the point clouds, and align them with each other.

Following the instructions of OpenCV, I used SURF feature points and the FLANN matcher. Let's define the left image of the previous location as Image 0 and the left image of the new camera location as Image 1. The depths are found for the matched feature points in Image 1, and then the 3D coordinates of these feature points are calculated. At this point, we have the 2D feature points in Image 0 and their corresponding 3D counterparts defined in the reference frame of Image 1. We can use solvePnPRansac() to compute the relative camera pose of Image 1 with respect to Image 0. Once the relative pose is obtained, we take Image 1 as the new Image 0 and repeat the above process with the next camera location.

The location of the very first stereo image pair is taken as the global origin, and successive camera locations are calculated with respect to this origin. After all the camera locations are obtained, a MeshLab project file is composed. In this project file, each point cloud is associated with a transformation based on its left camera pose. MeshLab reads the project file, imports all the point clouds, and aligns them automatically.

There are actually some points that I would like to share. First of all, avoid using the filter or ticking the flag named "Freeze current matrix" while you align your meshes, because this applies the transformation to the vertex coordinates and resets the matrix to identity, so you lose it.

To see the values of the current transformation matrix for each of the meshes, the easiest way is to use the "File -> Save Project" menu option. This creates a file with the extension ".mlp" in XML format, which means you can open it with any text editor (sometimes you may need to change the extension first). Each mesh in the file has the filename of the input mesh and a matrix. You want the matrix between the MLMatrix44 tags: it contains all the information about the translations, rotations, and scale applied to each mesh.
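Instead of opening the project file in a text editor, the matrices between the MLMatrix44 tags can also be read programmatically. The following is a minimal sketch using only the Python standard library. The sample project text, the file names `cloud_0.ply` and `cloud_1.ply`, and the function name `read_mesh_matrices` are hypothetical, and the element layout (`MLMesh` elements with a `filename` attribute and a nested `MLMatrix44`) is an assumption based on typical ".mlp" files, so check it against a project saved by your own MeshLab version.

```python
import xml.etree.ElementTree as ET

# Hypothetical project content for illustration only; a real file would come
# from MeshLab's "File -> Save Project" and may contain additional elements.
SAMPLE_MLP = """<!DOCTYPE MeshLabDocument>
<MeshLabProject>
 <MeshGroup>
  <MLMesh label="cloud_0.ply" filename="cloud_0.ply">
   <MLMatrix44>
1 0 0 0
0 1 0 0
0 0 1 0
0 0 0 1
</MLMatrix44>
  </MLMesh>
  <MLMesh label="cloud_1.ply" filename="cloud_1.ply">
   <MLMatrix44>
0 -1 0 0.5
1 0 0 0
0 0 1 -0.2
0 0 0 1
</MLMatrix44>
  </MLMesh>
 </MeshGroup>
</MeshLabProject>
"""


def read_mesh_matrices(mlp_text):
    """Return {mesh filename: 4x4 matrix as a list of rows} from project XML text."""
    root = ET.fromstring(mlp_text)
    matrices = {}
    for mesh in root.iter("MLMesh"):
        node = mesh.find("MLMatrix44")
        if node is None or node.text is None:
            continue  # mesh without a stored matrix: skip it
        values = [float(v) for v in node.text.split()]
        if len(values) != 16:
            raise ValueError("expected 16 matrix entries, got %d" % len(values))
        # The 16 numbers are stored row by row.
        matrices[mesh.get("filename")] = [values[r * 4:(r + 1) * 4] for r in range(4)]
    return matrices


if __name__ == "__main__":
    for name, matrix in read_mesh_matrices(SAMPLE_MLP).items():
        print(name)
        for row in matrix:
            print("  ", row)
```

For a project on disk, read the file contents into a string first and pass that string to `read_mesh_matrices`.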

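Going back to the pose-chaining step, here is a rough sketch of how the relative poses could be accumulated into global ones and written into a project file. It assumes each relative pose is already available as a 4x4 homogeneous matrix that maps points from the frame of Image 1 into the frame of Image 0, which is the direction a PnP solution gives when the 3D points are defined in the frame of Image 1 and the 2D points come from Image 0. The function names, the file names, and the exact project layout are hypothetical and are not the code used for the results above.

```python
def matmul4(a, b):
    """Multiply two 4x4 matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


IDENTITY = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]


def chain_poses(relative_poses):
    """Accumulate relative poses into global poses.

    relative_poses[k] is assumed to map points from camera frame k+1 into
    camera frame k. The first camera is the global origin (identity).
    """
    global_poses = [IDENTITY]
    for rel in relative_poses:
        # global(k+1) = global(k) * relative(k+1 -> k)
        global_poses.append(matmul4(global_poses[-1], rel))
    return global_poses


def compose_mlp(filenames, global_poses):
    """Build project text with one mesh entry and one matrix per point cloud."""
    lines = ["<!DOCTYPE MeshLabDocument>", "<MeshLabProject>", " <MeshGroup>"]
    for name, pose in zip(filenames, global_poses):
        lines.append('  <MLMesh label="%s" filename="%s">' % (name, name))
        lines.append("   <MLMatrix44>")
        for row in pose:
            lines.append(" ".join("%.9g" % v for v in row))
        lines.append("</MLMatrix44>")
        lines.append("  </MLMesh>")
    lines += [" </MeshGroup>", " <RasterGroup/>", "</MeshLabProject>"]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Toy data: each camera sits one unit further along x than the previous one.
    step = [[1.0, 0.0, 0.0, 1.0],
            [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]
    poses = chain_poses([step, step])
    names = ["cloud_0.ply", "cloud_1.ply", "cloud_2.ply"]
    print(compose_mlp(names, poses))
```

Because every point cloud is expressed in the frame of its own left camera, the accumulated matrix of a camera location can be used directly as the transformation of that location's point cloud.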